Students enter college and university from diverse backgrounds and life stages. The idea that a first-year student is always a recent high school graduate is a misconception; many students come to higher education with significant life experience.

The college/university culture of study is rarely the same as high school, making the transition difficult even for talented students. In many countries, the massification of higher education has opened up education to all, with the only limiting factor being a student’s ability to meet entry requirements and pay for subjects.

Since online education allows for greater lifestyle flexibility, particularly for students with jobs, family commitments, and/or those who live far from a university, many first-time tertiary students are enrolling in online subjects. Such students confront dual issues: learning at the university level and learning in a new environment. Additional stress is placed on these students because of the assumption that they know how to be autonomous learners who can manage their own learning and associated issues within the university setting. The combination of increased expectations and decreased personal contact with faculty and other students creates a complex challenge for academics at universities offering undergraduate degrees online (see articles in this issue by Adam Fenner and Lorena Neria de Girarte).

Even within the context of Seventh-day Adventist institutions that pride themselves on providing a high level of student support, students entering university for the first time often “hit the wall” when they experience difficulties. Whether students are traditional or online, they all experience difficulties at some time during their first year.

A virtual mentor program can provide support to all first-year students regardless of whether they are in online, blended, or traditional learning environments. One such initiative, implemented by The University of Newcastle in Newcastle, Australia, and later by Avondale College of Higher Education in Cooranbong, Australia, also supported students in other years of study who were experiencing difficulties and had been identified as “at risk.”

While the challenges associated with unfamiliarity soon pass for most students, life and study stressors seem to inhibit others from overcoming such difficulties. In addition, even students who appear to be progressing well can experience sickness, bereavement, or psychological issues; difficulties which, if not identified early, may lead to a downward spiral ending with disengagement from their studies. In these worst-case scenarios, students with excellent academic abilities may leave their studies or just “fall between the cracks.” Broadly, these students are referred to as “students at risk” because, for a variety of factors, they are not coping well and are likely to fail or drop out, often without being noticed. Students at risk require additional support, either short-term or for a longer duration. Even if they have ready access to a plethora of student-support services, students at risk often isolate themselves when they experience academic difficulty.

Large university class sizes make it difficult for academic staff, residential staff, advisors, chaplains, and even peers to notice individual students who are struggling. This problem is compounded in online environments. While the broader implications of a failed or missed assignment may go unnoticed by one lecturer, failure or non-submission of assignments across multiple subjects may indicate a student’s need for additional support, and this need may not be discovered until it is too late. Similarly, low levels of student access and/or engagement with a subject site within a Learning Management System (LMS) may signify a student experiencing difficulty with one subject, but low engagement across all LMS subject sites often indicates a student at risk. The cumulative effect surely indicates a problem, yet without the benefit of an LMS or similar tracking system, no single person at a university would be able to identify such a situation and respond before the student had failed multiple subjects or completely disengaged.

In most cases, higher education institutions offer adequate support services to help in these circumstances, but they are not always accessed by students. For students who seek help, support can usually be provided with relative ease, but identifying and providing support to students who isolate themselves remains a complex endeavor. While there is no single correct way to identify and support such students, leveraging the capacity of technology to assist in this process may offer a viable solution.

The Australian Context

Since the 1970s, considerable research has explored the issues of student retention and attrition in higher education. Research has confirmed the adverse impact of students withdrawing from a university before they obtain their degree, evident both in Australia1 and internationally.2 Student attrition not only affects the institution, but also the individual student and his or her family. Current funding models for the Australian higher education sector (HECS; FEE-HELP) and the United States (federal and private student loans) often leave students, regardless of subjects completed, with significant debt. Financial considerations aside, there are many other unfavorable outcomes that could be avoided if retention were managed more efficiently.

Widespread concern has been expressed at the revelation that one-third of all university students contemplate withdrawing during their first year of study.3 Within the context of Australia, the seminal work of McInnis et al.4 is still relevant, as first-year students, according to Krause,5 vacillate among three sometimes competing tensions:

- the relevancy to their lives of the program in which they are enrolled;

- perceptions of themselves as clients (from the marketing and service dimensions of their institution); and

- the disciplinary and academic integrity standards required by academics.

These tensions arguably contribute to students’ withdrawal from a college or university. Several models attempt to explain student retention and attrition, and numerous approaches aimed at reducing attrition have been explored and implemented among first-year university students attending universities in Australia. Strategies include attempts to increase levels of student engagement, the creation of learning communities, and tactics to construct academic and social integration. These strategies have been shown to positively influence student retention.6

The Identified Need

In 2013, the administration of Avondale College of Higher Education (Cooranbong, New South Wales, Australia) conducted a pilot survey assessing all undergraduate students, which revealed that many first-year students were either unaware of or had not used their institution’s support systems (e.g., learning support, counseling, subject-specific support, etc.). Highlighting these facilities and encouraging students to access them was seen as a first step in thwarting attrition and providing additional support to students in need.

Additionally, when large classes are the norm, students experiencing personal issues engage less, rarely ask for personal support, and often withdraw.7 Schools need to develop mechanisms to assist in identifying and supporting such students at risk through guidance, tools, and support as needed. If struggling students are rapidly directed to support services, the rates of withdrawals, failure, and personal distress should decrease.

The benefits of creating an overall atmosphere in which students feel academically and socially connected and sustained have been proved in research. Lizzio8 proposed that a student’s experience of belonging could be created through developing an environment that consists of “five senses of success”: connectedness, capability, resourcefulness, purpose, and culture. The creation of such an environment can positively enhance the experience of first-year students, specifically those who are feeling vulnerable and non-aligned.9 Cementing student connections with academic institutions is vitally important, but academic interventions may be less effective if they lack an accompanying emphasis on the critical first-semester component of social connectedness.10

The Avondale Virtual Mentor Program

All subjects at Avondale College of Higher Education have an online/blended component and use Moodle as the LMS to work with students outside formal classes. To help identify all first-year students who were potentially at risk and offer them assistance, Avondale implemented the Virtual Mentor (VM) program. A part-time VM role was created to track students’ progress and make contact with those who appeared to be experiencing difficulties. The VM reported to the vice president for academics and research.

Students were introduced to the VM on several occasions (including during online and face-to-face orientation, lectures, and tutorials) and by different people (including convenors, subject coordinators, administrative staff, and pastoral support staff). They were also informed about the roles and responsibilities of the VM through dedicated LMS Webpages.

When students failed an assessment item, or did not engage with LMS activities, the VM contacted them (usually by e-mail, sometimes by phone). After noting the student’s lack of progress, the VM asked questions about a range of issues, including the following:

- whether the student had a particular problem;

- if the student needed extra support from a tutor; and/or

- if the student needed to discuss his or her career choice with a program officer or with Careers Services.

Where appropriate, students were encouraged to:

- obtain support from the university’s Student Support Services;

- talk to a counselor; and/or

- meet with subject coordinators and/or program convenors (for residential students, the meetings would take place in person; for online students, as teleconferences).

The VM was tasked with fulfilling the following responsibilities:

- monitoring students’ progress through the use of “Gradebook” (a facility within the institution’s LMS that stores the marks on each assessment item for the subjects in which the students are enrolled);

- monitoring students’ online engagement (LMS statistics allow the VM to identify how often students have accessed the LMS and which options they selected);

- contacting students who have failed an assessment item or have not participated in an online activity;

- maintaining subsequent and regular contact with students at risk;

- tracking students at risk across all their enrolled subjects;

- liaising with subject coordinators and alerting them to student problems;

- keeping records of what occurred;

- analyzing records and providing feedback about trends to faculty and program convenors;

- identifying best practices to support students during their first year at the university;

- facilitating student-staff relationships; and

- raising the visibility of at-risk or failing students with the VM and subsequently with instructors and academic advisors.

The Virtual Mentor’s Activity

The VM maintained a centralized recording system and used a systematic approach to evaluate the components of each subject in which the students were enrolled, including their assessment timetable, discussion boards, etc., and ensured that a plan was developed in consultation with the college administration for all subjects.

The VM collected the following information, the highlights of which are shown in Table 1: (a) number of first-year subjects; (b) overall number of subjects monitored by VM; (c) number of students enrolled in each unit, cohort, and discipline; and (d) number of subjects per discipline.

Student engagement levels were classified using the following Engagement Indicators, with the VM evaluating the students’ LMS engagement across each of these domains to identify students who were potentially at risk:

- Accessed LMS before the end of Week 2;

- Downloaded Unit Outline before the end of Week 2;

- Downloaded Student Information (PDF file including detailed assessment information) before the end of Week 3;

- Accessed News Forum (announcement forum) before the end of Week 3;

- Frequency of accessing the subjects during Weeks 4-6;

- Click count during Weeks 4-6, including simply the number of mouse clicks the student made at the LMS site during that period;

- Submission of Assessment Task 1 (and, if relevant, extension request);

- Submission of Assessment Task 2 (and, if relevant, extension request); and

- Submission of Assessment Task 3 (and, if relevant, extension request).
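The Engagement Indicators listed above lend themselves to a simple rule-based check. The following is a minimal sketch of how such a check might look, not a description of the system actually used at Avondale. The `SubjectActivity` record, its field names, and the `min_visits` and `min_clicks` thresholds are all hypothetical; the article does not state what frequency or click count was treated as too low, and the sketch assumes the check is run after each assessment due date has passed.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class SubjectActivity:
    """Hypothetical summary of one student's LMS activity in one subject."""
    first_access_week: Optional[int]           # None means never accessed
    outline_download_week: Optional[int]
    student_info_download_week: Optional[int]
    news_forum_access_week: Optional[int]
    visits_weeks_4_to_6: int
    clicks_weeks_4_to_6: int
    # True if the task was submitted or an extension was requested
    tasks_submitted_or_extended: Tuple[bool, bool, bool]


def _done_by(week: Optional[int], deadline: int) -> bool:
    """True if the action happened on or before the deadline week."""
    return week is not None and week <= deadline


def missed_indicators(a: SubjectActivity,
                      min_visits: int = 1,
                      min_clicks: int = 1) -> List[str]:
    """Return the Engagement Indicators this student has not met.

    min_visits and min_clicks are assumed thresholds, chosen only
    for illustration.
    """
    missed = []
    if not _done_by(a.first_access_week, 2):
        missed.append("LMS access (Week 2)")
    if not _done_by(a.outline_download_week, 2):
        missed.append("Unit Outline (Week 2)")
    if not _done_by(a.student_info_download_week, 3):
        missed.append("Student Information (Week 3)")
    if not _done_by(a.news_forum_access_week, 3):
        missed.append("News Forum (Week 3)")
    if a.visits_weeks_4_to_6 < min_visits:
        missed.append("Access frequency (Weeks 4-6)")
    if a.clicks_weeks_4_to_6 < min_clicks:
        missed.append("Click count (Weeks 4-6)")
    for number, ok in enumerate(a.tasks_submitted_or_extended, start=1):
        if not ok:
            missed.append(f"Assessment Task {number}")
    return missed
```

Each missed indicator returned by a check like this would correspond to one possible follow-up contact from the VM.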

All VM activity was logged in a spreadsheet to allow correlation of records about individual students across multiple subjects. By monitoring Learning Management System activity, the VM was able to identify students who were performing poorly and at risk for failure.

When students failed to achieve any of the Engagement Indicators, they received a message from the system. The initial e-mail sent to students simply asked, “Is everything OK? It has been noticed you have not accessed your [Engagement Indicator] as yet.” As indicated in Table 2, more than 1,600 e-mails were sent out, and 80 replies were received from students either thanking the VM for the reminder or requesting support or advice.

The VM’s role was not an academic one, but rather to support and objectively advise students or direct them to the support appropriate for their difficulty. Interestingly, while the VM’s contact was sufficient to resolve some students’ issues, many other students did not respond to the message sent by the VM but simply acted upon its content. For those students who needed additional help, the objective advice of the VM proved invaluable.

The fact that the VM could monitor students’ progress regarding the specified Engagement Indicators across subjects was critically important in ensuring rapid identification of students who might be at risk. Overall trends are easily overlooked by academic staff who are unaware of students’ performance in other subjects. Furthermore, Subject Convenors do not have the capacity to monitor the totality of each student’s progress in order to identify those who may be at risk.
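The value of a cross-subject view can be illustrated with a short sketch. It assumes the VM's spreadsheet can be exported as rows of (student, subject, missed indicator); the row layout, the `flag_at_risk` name, and the `min_subjects` threshold of two are assumptions made for illustration and are not taken from the Avondale protocol.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def flag_at_risk(missed_rows: Iterable[Tuple[str, str, str]],
                 min_subjects: int = 2) -> Dict[str, List[str]]:
    """Group missed indicators by student and flag cross-subject patterns.

    missed_rows: (student_id, subject_code, indicator) tuples, one per
    missed Engagement Indicator.
    min_subjects: assumed threshold; a student is flagged when indicators
    were missed in at least this many different subjects.
    """
    subjects_by_student = defaultdict(set)
    for student_id, subject_code, _indicator in missed_rows:
        subjects_by_student[student_id].add(subject_code)
    return {
        student: sorted(subjects)
        for student, subjects in subjects_by_student.items()
        if len(subjects) >= min_subjects
    }
```

A lecturer who sees only one subject would see a single missed item per student; grouping by student is what surfaces the pattern across subjects.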

Table 2 outlines the type and range of interactions documented by the VM. These data were drawn from the initial trial of the VM program, which revealed a number of issues relating to the protocols. It should also be noted that the number of personal issues recorded during this trial period was higher than would normally be expected, as two major car accidents involving college students occurred close to assessment dates, one resulting in a fatality. Notably, the VM was able to observe the effects of these incidents by monitoring LMS engagement across all the subjects in which the students involved in the accidents were enrolled.

Insights Into Students’ Responsiveness to Staff Engagement With LMS

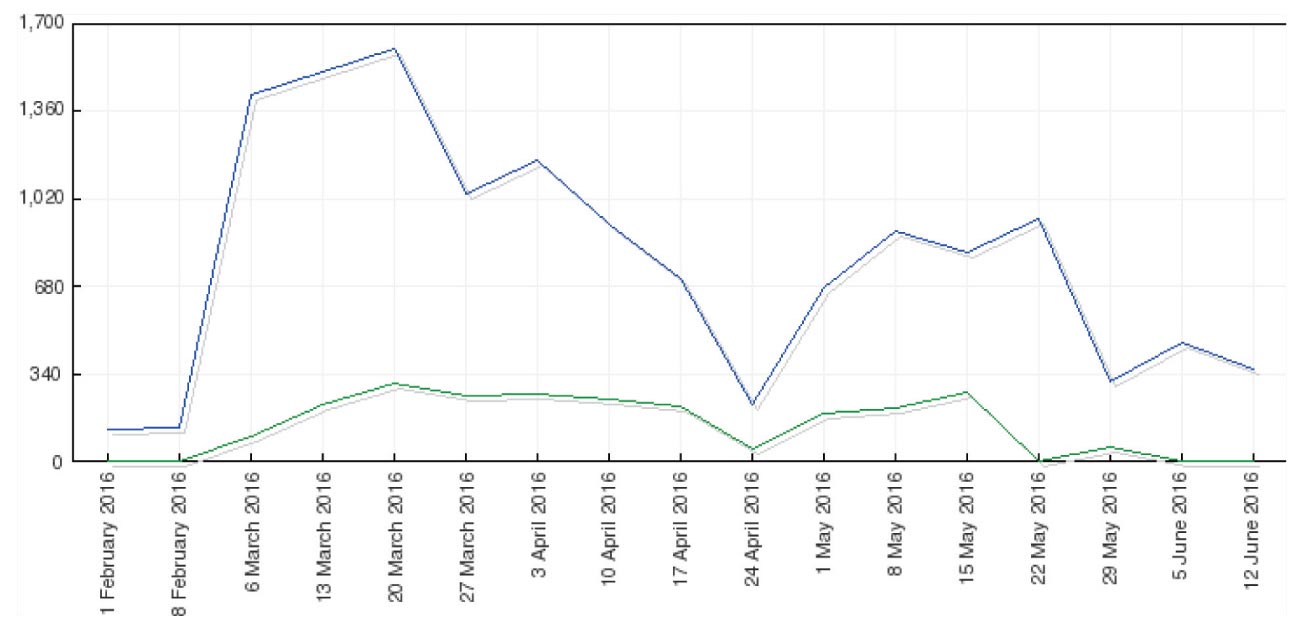

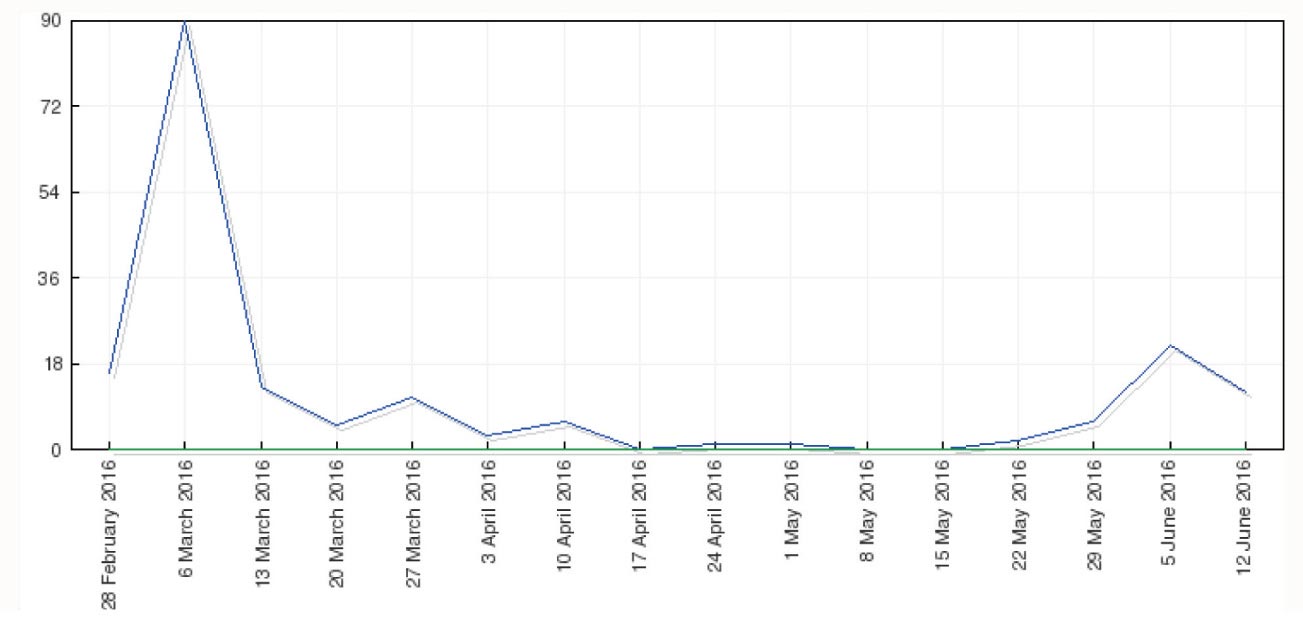

Student LMS activity levels are major indicators of engagement, so Avondale students with a low LMS activity profile were targeted by the VM. For these students, any lack of LMS engagement resulted in a follow-up message from the VM, which reminded them to regularly access and engage with learning material online. Also of interest, a high correlation was found between lecturers’ LMS engagement and the LMS engagement of their students (see Figures 1 and 2). The VM monitored the interaction between students and lecturers as part of following the first-year cohort and students at risk.

Figure 1. Student Engagement (Views) Increased With High Lecturer Engagement (Posts).

Figure 2. Student Engagement (Views) Decreased With Low-No Lecturer Engagement (Posts).

While such correlations are noteworthy, the administrators’ focus remained on monitoring students with low engagement profiles and then following up with such students. Considerable evidence suggested a direct correlation between students’ level of engagement and their final grades. Kim et al.11 found that levels of student activity and engagement in asynchronous online discussions correlated with final subject outcomes, specifically with reference to the domains of active participation (total time in LMS/discussion, frequency of LMS/discussion visits, number of postings), engagement with discussion topics (posting length, discussion time per visit), consistent effort and awareness (regularity of visits and time lapse between visits), and interaction (number of responses triggered by a post, number of replies to received responses). Because engagement levels correlate with success, a lack of engagement by students early in a semester indicates a likelihood that they will experience difficulty in completing a subject.
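For readers who wish to examine this kind of relationship in their own LMS data, the sketch below computes a Pearson correlation coefficient between two paired series, such as an engagement measure and final grades. The choice of Pearson's r is an assumption made for illustration; the article does not state which statistic was used at Avondale or in the studies cited, and the function name is hypothetical.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length series.

    xs might hold an engagement measure per student (for example,
    number of LMS visits) and ys the corresponding final marks.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return cov / sqrt(var_x * var_y)
```

A coefficient near 1 indicates that the two measures rise together and a value near 0 indicates no linear relationship. A correlation alone does not show that engagement causes better grades.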

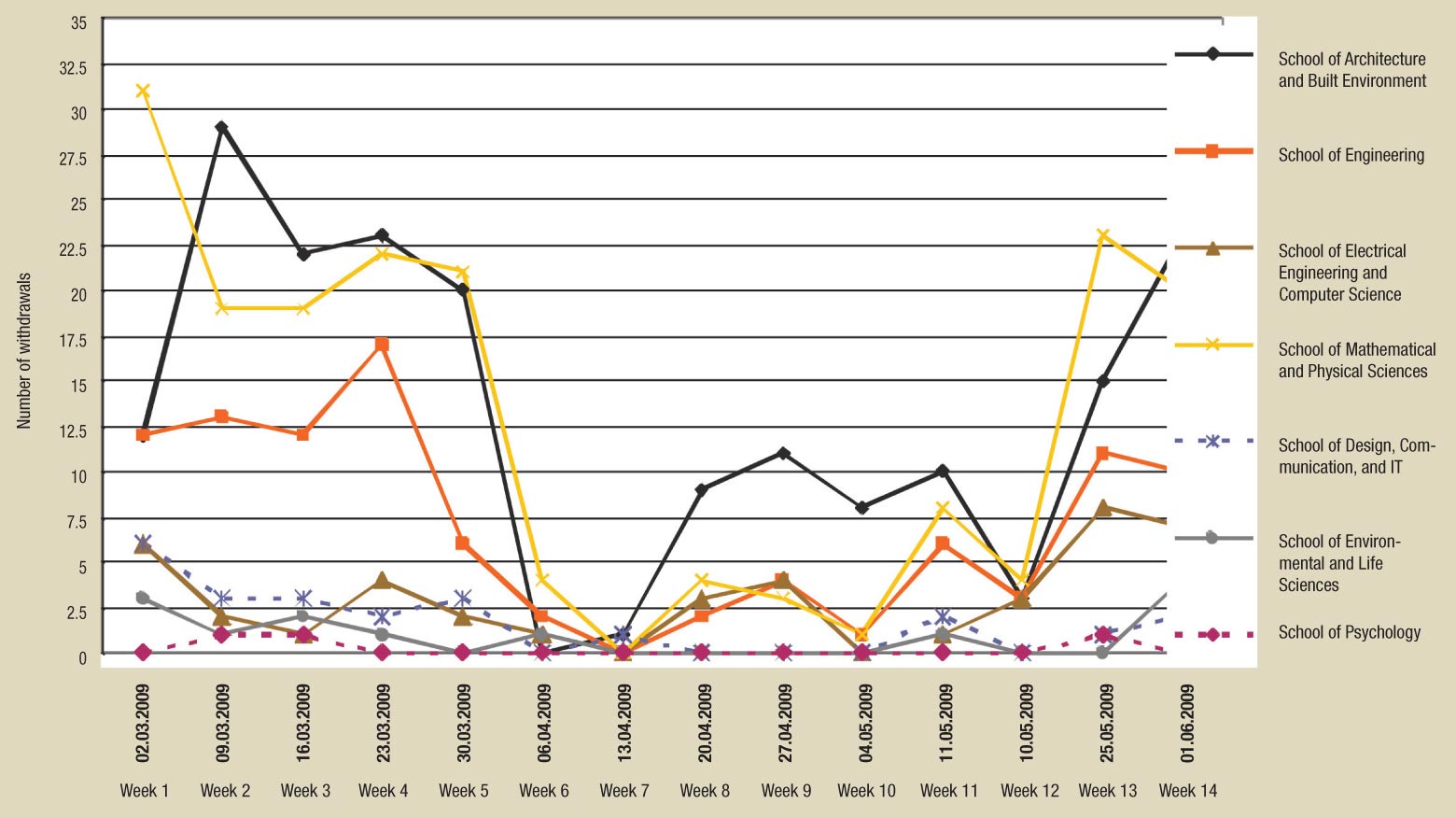

In a previous VM initiative at The University of Newcastle (Australia), engineering faculty tracked the withdrawal of students, both online and traditional, from a range of subjects.12 Figure 3 shows the weeks in which withdrawals occurred across a range of subjects in the program. While most withdrawals occurred early in the semester, their frequency clearly accelerated toward the end of the semester and before the cut-off when an academic penalty would be recorded on a student’s academic transcript. Figure 3 also shows that architecture and built-environment students withdrew at a significant rate. Many of the online learners were mature students who were working full time. Difficulties associated with time management are well known in the field and partially explain the trends shown in Figure 3. Surveys have shown that such students are, in the main, unaware of the support services available to them, thus exacerbating the challenges they face.

Figure 3. Number of Withdrawals From Subjects at The University of Newcastle (2006).

It is difficult to predict the full impact of the VM initiative due to the complex and diverse enrollment options available to students, though the number of first-year students having to “Show Cause” for their lack of progress while enrolled in a subject did provide some insight. Under the “Show Cause” process, students who had failed many subjects were asked to justify why they should remain enrolled in their selected program. Table 3 shows that the number of “Show Cause” students remained relatively constant from 2006 to 2008.13 Between the start of the VM project in 2007 and its discontinuation in 2012, a significant reduction in first-year students categorized as “Show Cause” and “at risk” was achieved. This suggests that after the project was implemented, first-year students were better informed and became more strategically engaged with their studies. As the introduction of the VM initiative was the only change over this period (2006-2008), any successes can appropriately be attributed to the VM program. While success has been observed with first-year students, it appears that upper-division students were not being as strategic, indicating the VM role may need to be expanded.

We have considered the VM program thus far from the perspective of assistance to students experiencing academic difficulty, but there is an extra dimension to the program―the ability to identify at-risk students early and support the ones experiencing non-academic difficulties. In a blended learning and online environment where the breadth of issues can be difficult to establish, the VM initiative was able to observe changes to the engagement profiles of a significant number of students at both The University of Newcastle and Avondale. Follow-up with these students identified the need for additional emotional support following two serious incidents, and the VM was able to direct students to the appropriate services as required. Students do not often self-disclose when they experience difficulties, and the visual cues provided through tracking student-engagement profiles by the VM significantly assisted with identifying these issues.

Conclusion

The VM program has been successfully implemented in two institutions, in total over a nine-year period, at Avondale College and The University of Newcastle, and evidence supports its benefits to students, especially those studying in blended and online environments. Evidence also suggests an increase in students from non-traditional backgrounds studying at universities, specifically in the online environment, during the time the VM program was run at the two institutions.

Often, when confronted with the option of moving their classes online, lecturers claim that they find it difficult to teach students unless they can “look them in the eye.” As more and more students choose to study online, subject designers and teachers will need to develop strategies that effectively support their transition to learning in this environment.

While these strategies need further development, researchers in the field continue to study the significant capacity of LMS analytic systems which, until now, have been underutilized. Systems that record student use of LMSs, through tracking and analysis of online data (analytics), regularly appear with reports of their success in the literature.

Agudo-Peregrina et al.14 outlined three “system-independent” interaction classifications: those based on the agent (student-student, student-teacher, student-content); those based on frequency of use (Most Used: transmission of content; Moderately Used: discussions; student assessment/evaluation; and Rarely Used: subjects/teacher/satisfaction evaluation surveys, computer-based instruction); and classification based on participation mode (active vs. passive interaction). They evaluated the relationship of each component against academic performance across two different learning modalities (blended learning and online learning), and developed an extraction and reporting plug-in tool for the LMS to automatically classify interactions into the appropriate category.

Results indicated that student academic performance correlated with active interaction across each system classification, but only in purely online learning (no correlation was found in blended learning). LMSs thus have a great deal of potential, and as Cerezo et al.15 highlighted, recent advances in the use of educational data mining in LMSs can help identify and predict students’ learning styles, effort expended, and learning achievement.

As these two examples show, great potential exists for the application of LMS analytics to enrich initiatives like the VM program. The task of writing protocols for the complex analytics that will enhance teaching and ultimately improve the capacity to effectively and successfully support students lies before us as program administrators and researchers. The ability to identify students experiencing difficulty, and simply acknowledge that difficulty by asking, “Is everything okay?” before providing or directing them toward appropriate additional support gives teachers in blended and online environments the capability to almost “look their students in the eye.”

This article has been peer reviewed.

Recommended citation:

Anthony Williams et al., “An Initiative to Support First-year Students and Students at Risk: The Virtual Mentor Program,” The Journal of Adventist Education 80:1 (January-March 2018): 22-29. Available at https://www.journalofadventisteducation.org/en/2018.1.5.

NOTES AND REFERENCES

- Kerri-Lee Krause, “The Changing Face of the First Year: Challenges for Policy and Practice in Research-led Universities.” Paper presented at the University of Queensland First-year Experience Workshop, Queensland, Australia, October 31, 2005: https://www.griffith.edu.au/__data/assets/pdf_file/0007/39274/UQKeynote2005.pdf; Craig McInnis et al., “Non-completion in Vocational Education and Training and Higher Education: A Literature Review Commissioned by the Department of Education, Training, and Youth Affairs,” University of Melbourne Centre for the Study of Higher Education (CSHE) (2000).

- Vincent Tinto, “Taking Retention Seriously: Rethinking the First Year of College,” NACADA Journal 19:2 (Fall 1999): 5-9; Mantz Yorke, “The Quality of the Student Experience: What Can Institutions Learn From Data Relating to Non-completion?” Quality in Higher Education 6:1 (April 2000): 61-75.

- More than 4,000 students attending seven universities throughout Australia were surveyed about their attitudes and behavior during the first year they were enrolled in a university. The study also sought to identify patterns in how the students adjusted to university life and how they rated the quality of their university experience. Researchers sought to identify trends over a five-year period. This trends report is published every five years, with a more recent publication occurring in 2015. See Craig McInnis, Richard James, and Robyn Hartley, Trends in the First-year Experience in Australian Universities (Canberra, Australia: Department of Education, Training, and Youth Affairs, 2000): http://melbournecshe.unimelb.edu.au/__data/assets/pdf_file/0008/1670237/FYE.pdf; Anne Pitkethly and Mike Prosser, “The First Year Experience Project: A Model for University-wide Change,” Higher Education Research and Development 20:2 (July 2001): 185-198.

- McInnis, Trends in the First-year Experience in Australian Universities.

- Kerri-Lee Krause et al., The First-year Experience in Australian Universities: Findings From a Decade of National Studies (Barton, Australia: Commonwealth of Australia, Department of Education, Science, and Training, 2005). This report presents findings from a study based on 2,344 individual respondents, and reports changes over a period of 10 years. This trends report is published every five years, with a more recent publication occurring in 2015.

- Vincent Tinto, Anne Goodsell-Love, and Pat Russo, “Building Community,” Liberal Education 79:4 (Fall 1993): 16-22; Chun-Mei Zhao and George D. Kuh, “Adding Value: Learning Communities and Student Engagement,” Research in Higher Education 45:2 (March 2004): 115-138.

- Krause et al., The First-year Experience in Australian Universities: Findings From a Decade of National Studies.

- Alf Lizzio, “Designing an Orientation and Transition Strategy for Commencing Students.” Paper presented at Griffith University, Gold Coast, Australia, 2006.

- Ibid.

- Jennifer Masters and Sharn Donnison, “First-year Transition in Teacher Education: The Pod Experience,” Australian Journal of Teacher Education (Online) 35:2 (March 2010): 87.

- Dongho Kim et al., “Toward Evidence-based Learning Analytics: Using Proxy Variables to Improve Asynchronous Online Discussion Environments,” The Internet and Higher Education 30 (July 2016): 30-43.

- Anthony Williams and Willy Sher, “Using Blackboard to Monitor and Support First-year Engineering Students.” Paper presented at the 18th Annual Australasian Association for Engineering Education Conference, Melbourne, Australia, 2007: http://conference.eng.unimelb.edu.au/aaee2007/papers/paper-34.pdf.

- No data was accessed beyond 2008 since the data had been collected to that point to determine viability of the initiative.

- Ángel F. Agudo-Peregrina et al., “Can We Predict Success From Log Data in VLEs? Classification of Interactions for Learning Analytics and Their Relation With Performance in VLE-supported F2F and Online Learning,” Computers in Human Behavior 31 (February 2014): 542-550.

- Rebecca Cerezo et al., “Students’ LMS Interaction Patterns and Their Relationship With Achievement: A Case Study in Higher Education,” Computers & Education 96 (May 2016): 42-54.